This is the second article in the PDA series on Fraud and Artificial Intelligence (AI). In this article we will be taking a closer look at Generative Adversarial Networks (GANs) which are used to create extremely realistic images using AI, and how this can be used by fraudsters.

What are GANs?

In our first article we gave a brief overview of Generative Adversarial Networks (GANs), which are used to create life-like images of people.

There is an incorrectly held belief that GANs are just stitching together different facial features from different photos in a sort of cut and paste way (like you might do on photoshop) – this is not the case at all for GANs. They are generating the image completely from scratch in a similar way to CGI in films – each image is completely generated by computers.

The term ‘adversarial’ in the name Generative Adversarial Network is due to how they work – one ‘network’ (or brain) creates an image at random, and the other network sees if this matches the prompt given, based on what it knows from the training network.

For instance, if the GAN has been asked to create a picture of a car, one network will create random images from scratch whilst the other checks to see if this picture looks like the images labelled ‘car’ in the training data. This is done in steps – network 1 will eventually create something that looks like it has 4 wheels, and network 2 will say that it looks a bit like a car, so continue down that line of processing.

This is a rather simplistic explanation, but the main thing to take away from this is that it is creating images from scratch and that it is completely random.

So how do you use a GAN?

From the user’s point of view, they are simple to get basic results out of, but difficult to get consistent and realistic images out of. There are a number of limitations that we will go through later, but generally speaking it is difficult (although by no means impossible) to get GANs to generate precise ‘actions’ or iterations of exactly the same thing. As an example, see the below – it’s easy to create pictures of a realistic looking red car, but it’s not the same car in each:

To create this, all I needed to do was download the required software to a reasonably powerful computer, load up the software, and type in my request (“photograph of a red car”). You can give more information to the GAN to make it generate something more precise – take the following example of “red car with green wheels”:

Fraudsters use GANs to create completely fictious people for use in application fraud and romance fraud which can match the supporting documentation that they already have. For instance, if you have a passport information page with the headshot, it is relatively easy to create a person that looks similar enough using GAN methodologies.

Here is an example of using a GAN to evolve from a passport photo to a picture of a person. Note that this is by no means the best that can be done, but rather what can be achieved quickly:

Whilst these images may not pass a diligent KYC officer, it only took about 5 minutes to go from a passport to an image of a person which matches the basic form of the original image. With an improved workflow and more practice, it would be possible to create much better results in an even shorter timeframe.

ControlNet

ControlNet is a ‘plugin’ for a certain type of GAN called Diffusion Models. This allows for much greater control and the replication of results with slight differences. As such, this can allow for the creation of images of the same generated person in many different poses, or quickly create many different versions of the same type of image.

Take the following example of a woman. The first one is of her sitting down, but with ControlNet we can now get GAN to create the same (or a very similar) woman standing up.

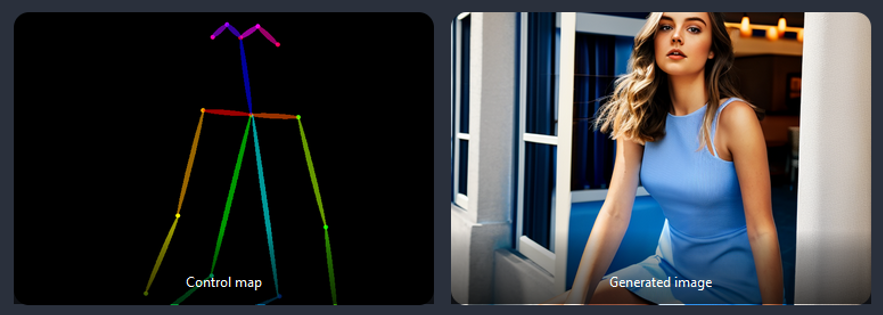

Similarly, ControlNet can be used to pose by drawing a rough outline (as seen on the left), and then a generate an image of the same woman in a different pose.

(https://stable-diffusion-art.com/controlnet/)

Whilst these images aren’t perfect and there is definitely some difference between the generated woman, it is extremely close (so much so that a busy verification or KYC agent might miss it!) and is getting more and more efficient by the day.

What are the limitations?

This is evolving every day –

- GANs often create unrealistic ‘artefacts’ as they do not truly know what is real or not. Take the following picture:

- This is a prime example of artefacts that are introduced whilst using AI, with warping around the wrist and a bit of extra shirt attached. These can be easily edited out (or new images generated until you get outcome without artefacts) but this can be time consuming.

- GANs cannot currently create realistic documents or text – while it is probably only a matter of time, as currently GANs cannot create photos with coherent text in, it means it is not currently possible to create ID documents or utility bills using GAN.

- Getting exact results is difficult and time consuming – it is relatively easy to create a realistic looking face, but significantly harder to create posed variations that look like the same person even with ControlNet

What are the risks?

The core risk of Generative Adversarial Networks revolves around the creation of fake images for use in application fraud. As mentioned earlier, with an effective workflow, a fraudster could use GAN technology to create hundreds of realistic looking portrait pictures which could be used to apply for online applications or romance fraud.

Whilst there are many groups and companies developing GANs, one of the shared development goals is to allow for the creation of a ‘character’ which can be posed, put in different situations and in different clothing. This will make for a significantly increased threat due to the easy creation of whole social media profiles, and characters that can easily respond to challenges.

How can we mitigate those risks?

There are a number of ways to mitigate the risk:

- Digital Document Forensics methodologies will help detect generated images through a number of technical methodologies. More information on these methods can be found here

- Text and documents cannot be generated well. They could be photoshopped on top of the image, but this is difficult to get to look correct. Consider asking for the user to hold up the same passport or document in different positions.

- Most of the training sets of GANs are from professional photos and images – this gives most images a ‘prepared’ look, like they are from a magazine. However, many training sets are being developed from amateur/natural photos which will circumvent this.

- Hands and hair were originally difficult for GANs, however these problems have mostly been overcome. It is still difficult to get them just right, so be aware of ‘poses’ that hide the hands.

In the next article in this series, we will look into Large Language Models (such as ChatGPT) and how they can be used to create convincing, fraudulent documents, and correspondence which can be a risk to both business and individuals.